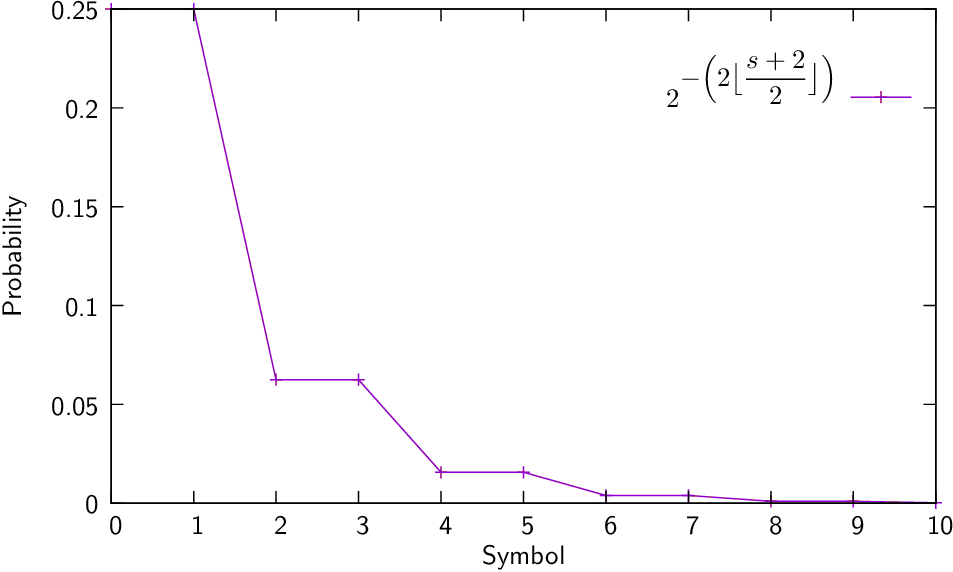

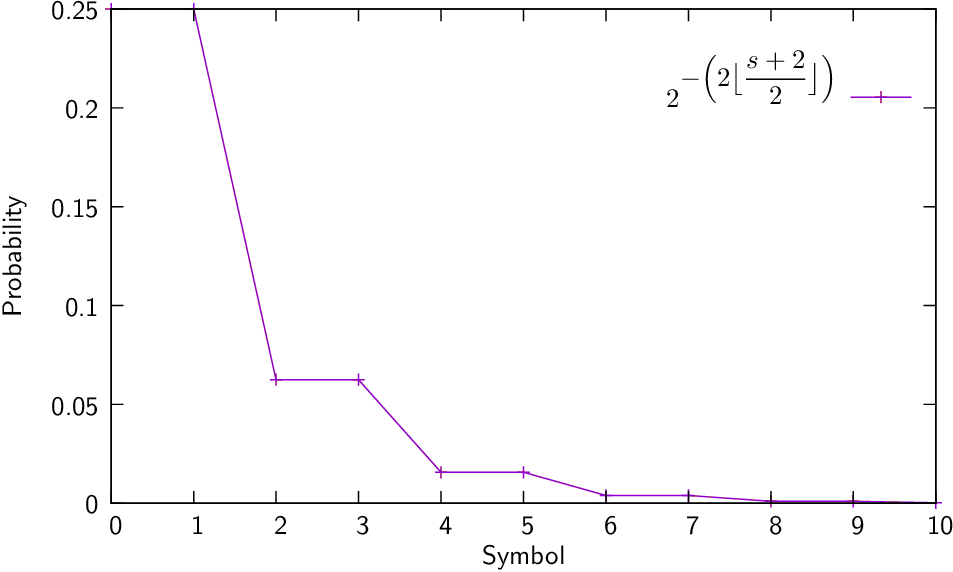

| (Eq:Golomb) |

where $n$ is the symbol and $m$ is the “Golomb slope” (the Golomb parameter) of the distribution.

| (Eq:Rice) |

| Golomb parameter | 1 | 2 | 3 | 4 | 5 | 6 | 7 | 8 |
|---|---|---|---|---|---|---|---|---|
| Rice parameter | 0 | 1 | | 2 | | | | 3 |
| 0 | 0 | 00 | 00 | 000 | 000 | 000 | 000 | 0000 |
| 1 | 10 | 01 | 010 | 001 | 001 | 001 | 0010 | 0001 |
| 2 | 110 | 100 | 011 | 010 | 010 | 0100 | 0011 | 0010 |
| 3 | 1110 | 101 | 100 | 011 | 0110 | 0101 | 0100 | 0011 |
| 4 | 11110 | 1100 | 1010 | 1000 | 0111 | 0110 | 0101 | 0100 |
| 5 | | 1101 | 1011 | 1001 | 1000 | 0111 | 0110 | 0101 |
| 6 | | 11100 | 1100 | 1010 | 1001 | 1000 | 0111 | 0110 |
| 7 | | 11101 | 11010 | 1011 | 1010 | 1001 | 1000 | 0111 |
| 8 | | 111100 | 11011 | 11000 | 10110 | 10100 | 10010 | 10000 |
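The codewords in the table are consistent with the usual Golomb construction: the quotient of the symbol by the parameter is written in unary (a run of ones closed by a zero), followed by the remainder in truncated binary. The sketch below reproduces the table entries under that reading; the function names `truncated_binary`, `golomb_encode`, and `golomb_decode` are illustrative and do not come from the documented implementation.

```python
def truncated_binary(r: int, m: int) -> str:
    """Encode a remainder r (0 <= r < m) in truncated binary."""
    if m == 1:
        return ""                       # a single possible remainder needs no bits
    b = (m - 1).bit_length()            # ceil(log2(m))
    cutoff = (1 << b) - m               # how many remainders get the short (b-1 bit) form
    if r < cutoff:
        return format(r, f"0{b - 1}b")
    return format(r + cutoff, f"0{b}b")


def golomb_encode(n: int, m: int) -> str:
    """Return the Golomb codeword of symbol n >= 0 for parameter m >= 1 as a bit string."""
    q, r = divmod(n, m)
    return "1" * q + "0" + truncated_binary(r, m)


def golomb_decode(bits: str, m: int) -> int:
    """Decode a single Golomb codeword produced by golomb_encode."""
    q = bits.index("0")                 # length of the unary run of ones
    pos = q + 1
    if m == 1:
        return q
    b = (m - 1).bit_length()
    cutoff = (1 << b) - m
    # Try the short form first; fall back to the long form if needed.
    r = int(bits[pos:pos + b - 1], 2) if b > 1 else 0
    if r >= cutoff:
        r = int(bits[pos:pos + b], 2) - cutoff
    return q * m + r


if __name__ == "__main__":
    # Row for symbol 3, parameters 1 through 8.
    print([golomb_encode(3, m) for m in range(1, 9)])
```

A round trip through `golomb_decode(golomb_encode(n, m), m)` returns `n`, which is a quick way to check any parameter not listed in the table.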

Note: this implementation of the Golomb encoding estimates the parameter automatically.
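The note does not say how the parameter is estimated. One common approach, given here only as a sketch and not as this implementation's actual method, assumes the symbols follow a geometric distribution $P(n) = (1-p)\,p^n$, estimates $p$ from the sample mean, and picks the smallest $m$ with $p^m + p^{m+1} \le 1$. The helper name `estimate_golomb_parameter` is hypothetical.

```python
import math
from typing import Sequence


def estimate_golomb_parameter(symbols: Sequence[int]) -> int:
    """Hypothetical helper: choose m from the sample mean under a geometric model."""
    if not symbols:
        raise ValueError("need at least one symbol")
    mean = sum(symbols) / len(symbols)
    if mean == 0:
        return 1                        # all zeros: plain unary is already minimal
    p = mean / (1.0 + mean)             # geometric model: mean = p / (1 - p)
    # Smallest m with p**m * (1 + p) <= 1 (assumed rule, see lead-in).
    m = math.ceil(-math.log(1.0 + p) / math.log(p))
    return max(1, m)
```

For example, a sample whose mean is 9 gives $p = 0.9$ and $m = 7$, since $0.9^7 \cdot 1.9 \approx 0.909 \le 1$ while $0.9^6 \cdot 1.9 \approx 1.010 > 1$.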

Note: this implementation of the Rice encoding estimates the parameter automatically.
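As with the Golomb note, the estimation method is not described. Rice coding is the Golomb code whose parameter is a power of two, $2^k$, so the remainder is simply the low $k$ bits of the symbol. The sketch below encodes with shifts and masks and, as one possible strategy (an assumption, not the documented behaviour), picks the $k$ that minimises the total encoded length of a sample. The names `rice_encode`, `rice_length`, and `best_rice_parameter` are illustrative.

```python
from typing import Sequence


def rice_encode(n: int, k: int) -> str:
    """Rice codeword of symbol n >= 0: unary quotient, then the k low bits of n."""
    q = n >> k                          # quotient by 2**k
    r = n & ((1 << k) - 1)              # remainder: the k low bits
    tail = format(r, f"0{k}b") if k > 0 else ""
    return "1" * q + "0" + tail


def rice_length(n: int, k: int) -> int:
    """Length in bits of rice_encode(n, k), without building the string."""
    return (n >> k) + 1 + k


def best_rice_parameter(symbols: Sequence[int]) -> int:
    """Hypothetical helper: exhaustive search for the k with the shortest total length.

    Ties are broken in favour of the smallest k.
    """
    if not symbols:
        raise ValueError("need at least one symbol")
    max_k = max(symbols).bit_length()   # larger k can only add padding bits
    return min(range(max_k + 1),
               key=lambda k: sum(rice_length(n, k) for n in symbols))
```

With $k = 2$ and $k = 3$ this reproduces the table columns for Golomb parameters 4 and 8, for instance `rice_encode(5, 2)` yields `1001`.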